Delve and Crunchyroll Expose the Same Problem

Delve and Crunchyroll look like very different stories. One is about alleged compliance fraud. The other is about a third-party breach. But for security and risk leaders, they point to the same underlying issue: most TPRM programs are built to collect evidence, not verify trust. That distinction matters more than ever, because it helps explain why so many organizations can follow the process, review the documentation, and still get blindsided.

The Delve story centers on a fast-growing compliance startup accused of generating near-identical SOC 2 reports for hundreds of clients, with auditor conclusions allegedly written before any independent review took place. Delve disputes the most serious allegations. If the allegations are true, the story is not just about one company’s misconduct. It is about how easily a system built around polished evidence can be manipulated when the primary goal is approval.

The Crunchyroll story reveals the other side of the same design flaw. A hacker compromised an employee at Telus Digital, a third-party vendor handling customer support for the Sony-owned streaming platform, exposing data tied to 6.8 million users. Crunchyroll’s internal security was not the central issue. The problem was that the risk emerged at a vendor, after the point when the company had likely already completed its diligence. In other words, the process may have worked exactly as designed and still failed to catch the risk that mattered.

Put together, these cases show two predictable weaknesses in the traditional model.

- First, it can be fooled by evidence that looks credible but is not.

- Second, it has limited ability to detect meaningful risk that develops after an assessment is complete.

Delve highlights the first problem. Crunchyroll highlights the second. Both show why the old model is becoming harder to defend.

The Real Problem Is Not Execution. It Is Design.

Traditional TPRM starts from a reasonable premise: before a vendor gets access to sensitive systems or sensitive data, they should demonstrate that they are secure. That basic idea still makes sense. The problem is that over time, the market evolved around proving readiness for approval, not providing durable assurance. What began as a security mechanism gradually became, in many cases, a process mechanism.

That shift changed vendor behavior.

Vendors learned that they are often rewarded less for being secure than for being easy to approve.

Over time, that changed the role of documentation itself. SOC 2 reports, questionnaires, and certifications did not stop being useful inputs, but they increasingly became part of the sales motion as much as the security motion. The result is a system where evidence is often optimized to reduce friction, not necessarily to provide the clearest possible picture of risk.

That is what makes the Delve story so significant. If the allegations are true, Delve did not invent a new problem. It exposed an extreme version of a dynamic the market had already accepted: evidence shaped for approval can still be treated as evidence of trust. The scale and brazenness of the allegations are what made the story newsworthy. The underlying incentive structure has been visible for years.

Even when the documentation is legitimate, the model still has a second structural flaw: it goes stale quickly. Most TPRM programs remain heavily dependent on point-in-time assessments. A vendor is reviewed, approved, and onboarded, and then the organization relies on periodic reassessment to maintain confidence. But vendor risk does not change on an annual review cycle. It changes continuously, often in ways that are hard to see if your program only becomes active at onboarding and renewal.

- Teams change.

- Devices get compromised.

- Subprocessors are added.

- Controls drift.

- Exposure expands.

A vendor may pass the process and still become your problem months later. That is what makes the Crunchyroll case so important. Even if the original diligence was thorough, it would not necessarily have told the company anything about a later malware infection on a support agent’s device. That is not simply a gap in execution. It is a flaw in how the model is built.

Why This Matters to Executives

Executives do not need another reminder that third-party risk is real. What they need is a clearer explanation of why so many programs still produce false confidence. That false confidence comes from mistaking activity for assurance. A team can collect documents, review responses, complete the assessment, and satisfy the process, yet still lack meaningful visibility into whether a vendor is actually trustworthy or whether that trust still holds today.

That creates risk at the leadership level because it can make a program look more effective than it actually is.

When diligence is measured by workflow completion rather than by verified understanding, organizations can end up with a very strong record of process execution and a much weaker grip on live vendor exposure. From a governance standpoint, that is a serious problem. It means leaders may be relying on indicators that reflect program activity more than actual resilience.

It also creates a market distortion that affects vendors. Companies that make real investments in security, transparency, and operational maturity often have to compete in the same process as vendors that are simply better at packaging their answers. When polished documentation and trustworthy evidence are treated as roughly equivalent, the system sends the wrong signal to the market. It rewards presentation in places where it should be rewarding substance.

That is why this is not just a security operations issue. It is a leadership issue. It affects how organizations allocate trust, how they evaluate exposure, and how they interpret the health of a critical control function. If the model produces confidence more reliably than it produces clarity, executives should question whether the model is still serving its purpose.

Three Shifts for Stronger TPRM

If the problem is structural, the fix cannot be cosmetic. The answer is not just a slightly better questionnaire, a more efficient intake workflow, or a faster way to collect the same artifacts. Those improvements may reduce friction, but they do not address the core design problem.

A better model has to change what the program is trying to achieve and how it gets there.

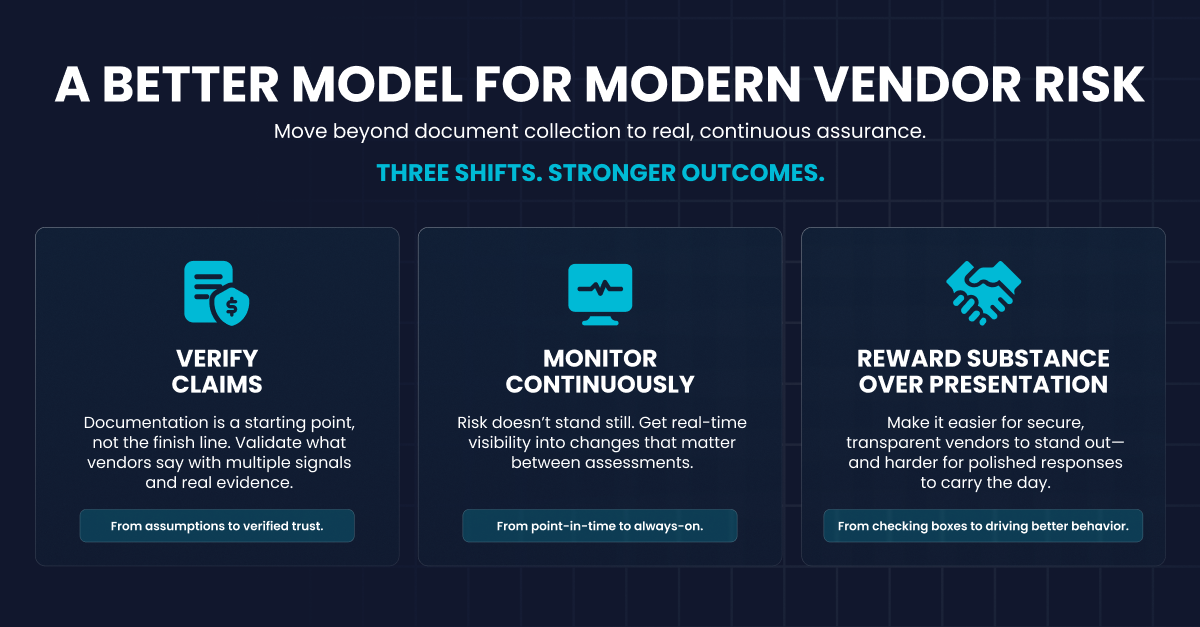

1. The first shift is moving from collecting claims to verifying claims.

Documentation should still matter, but it should be treated as the starting point, not the conclusion. A SOC 2 report, a questionnaire, or a certification may offer useful signals, but none of them should be mistaken for proof on their own. Stronger programs are built to test whether the story holds together across controls, architecture, operational context, attestations, and other available signals. The key question is not whether the vendor submitted evidence. It is whether the evidence deserves to be trusted.

2. The second shift is moving from point-in-time assessment to continuous visibility.

Periodic reviews are better than no reviews, but they leave obvious blind spots between checkpoints. Vendor risk evolves continuously, which means programs need ways to surface meaningful changes as they happen, not just after the fact. If an organization only learns about material changes when the next assessment cycle begins, it is reacting to stale conditions rather than managing live risk.

3. The third shift is creating a model that better rewards substance.

A healthy market should make it easier for genuinely secure, transparent vendors to distinguish themselves. It should not force them into the same pattern of response optimization as vendors that are primarily focused on clearing hurdles. If the easiest route to approval is polished paperwork, the market will continue producing more polished paperwork. Better TPRM should make it easier to recognize real investment in security and harder for presentation alone to carry the day.

Where Whistic Fits In

Whistic is part of this category, so we are not standing outside the problem pretending to diagnose it from a distance. But we do believe the market has outgrown the old model.

Document collection is not the same as verification. Periodic assessment is not the same as visibility. Workflow efficiency is not the same as stronger risk outcomes.

That does not mean the category changes overnight, and it does not mean every part of the old model disappears. Documentation still has value. Assessments still have value. But they are no longer enough by themselves, and pretending otherwise becomes harder each time stories like these break into public view.

That is why these incidents matter beyond the companies involved. They illustrate, in very public ways, what many teams have already felt privately: the traditional model can create the appearance of assurance without delivering enough of the substance. Once that becomes clear, the real challenge is not just improving the workflow. It is redesigning the program.

The Question Leaders Should Be Asking

The most important question raised by Delve and Crunchyroll is not whether those companies made mistakes. It is whether your own program is built to catch the kinds of failures that traditional assessments routinely miss. That is the more uncomfortable question, because it shifts the focus away from isolated incidents and back onto the assumptions built into the program itself.

If your TPRM model is still centered on document collection and periodic review, then these stories should be read as a warning. Not because every vendor is untrustworthy, and not because every assessment is ineffective, but because the underlying design leaves organizations exposed in ways that are increasingly difficult to justify. It leaves too much room for unreliable evidence to pass as assurance and too little visibility into how vendor risk changes over time.

That is the real takeaway for executives. The issue is no longer whether the process was followed. The issue is whether the process is capable of producing the level of trust and visibility modern third-party risk actually requires. If the answer is no, then the work ahead is not about tightening the same model. It is about moving to a better one.